October 30, 2018 at 14:05

AI can be a great tool for improving cybersecurity. In fact, it is more than a tool: it is a far more complex system that can pick up anomalies faster than any human, learn continuously and become more efficient as it goes along.

People are the weakest link in cybersecurity – How should they be monitored?

We all know that people are the weakest link in cybersecurity. People-based threats are difficult to predict and to build defences against, but for a pattern-recognition and learning task like this, AI is an obvious answer. AI can effectively learn an employee’s typical behaviour pattern and raise an alert or trigger a lockdown when it spots something unusual: “That’s not how Tuisku usually types after 16:00”, for example.
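To make that concrete, here is a minimal sketch of the idea, assuming the "typical behaviour pattern" is reduced to a single signal: the time between keystrokes. The function names, the sample numbers and the threshold of three standard deviations are all illustrative assumptions, not how any particular product works; real systems would use far richer models.

```python
from statistics import mean, stdev


def typing_baseline(intervals_ms):
    """Summarise past inter-keystroke intervals (ms) as (mean, standard deviation)."""
    return mean(intervals_ms), stdev(intervals_ms)


def is_unusual(session_intervals_ms, baseline, z_threshold=3.0):
    """Return True if the session's mean interval lies more than
    z_threshold baseline standard deviations from the baseline mean."""
    base_mean, base_std = baseline
    session_mean = mean(session_intervals_ms)
    if base_std == 0:
        # No variation in the history: any difference counts as unusual.
        return session_mean != base_mean
    z_score = abs(session_mean - base_mean) / base_std
    return z_score > z_threshold


# Hypothetical history of one employee's typing intervals after 16:00.
history = [180, 190, 175, 185, 200, 195, 170, 188, 192, 183]
baseline = typing_baseline(history)

print(is_unusual([182, 191, 178], baseline))  # False: close to the usual rhythm
print(is_unusual([90, 85, 95], baseline))     # True: far faster than usual
```

Even this toy version only works if someone's keystroke timings are collected and stored over time, which is exactly where the questions below begin.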

Let’s stop there and rephrase.

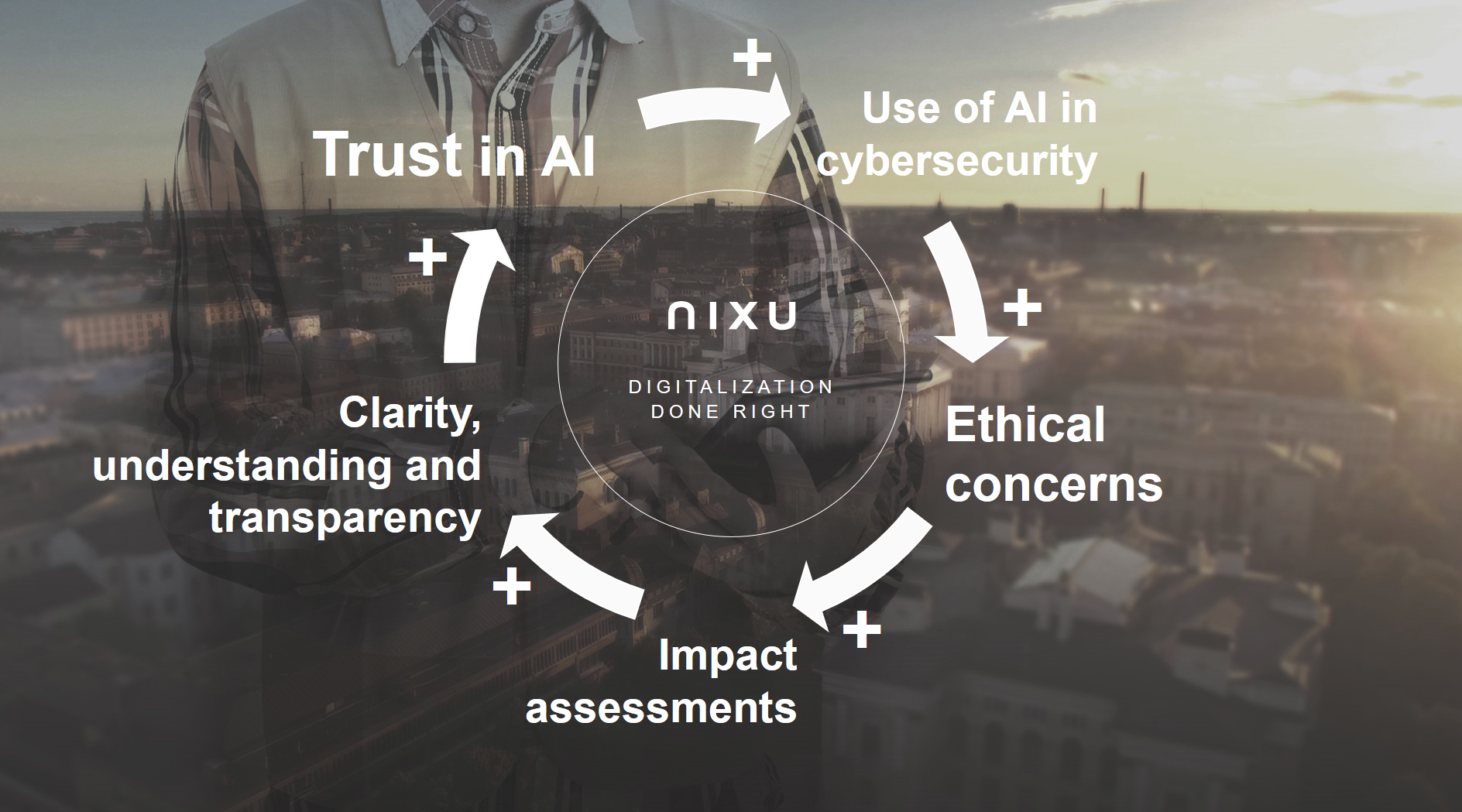

That AI is no longer merely picking up cybersecurity threats; it is monitoring every minute detail of an employee’s life and continuously building an avatar of them: how long they usually look at certain files, what their usual typing pattern is, when they come through the door, how they dress or sound, with whom they have lunch. Why would they complain if they have nothing to hide? Let’s remember that we are looking at a highly sophisticated and intrusive surveillance capability, and we cannot simply enjoy its convenience while ignoring the ethical questions that arise. The ethics of AI and cybersecurity is an increasingly discussed subject for a reason.

Privacy impact assessments of new uses of AI

Nixu’s mission is to keep the digital society running. We already have some tools that help us assess new technologies that make impactful automated decisions about people: we can (and if we want to comply with the law, we most definitely should) carry out privacy impact assessments of such intrusive uses of AI.

An impact assessment process forces you to examine, in a structured way, many of the hot topics of AI, such as the potential impacts on people, how the AI makes decisions, how to explain its workings to one another, how the AI’s human operators can review and change the decisions it has made, and so on.

The resulting understanding, clarity and transparency have huge value beyond the assessment itself: they build trust in AI. We desperately need that increased trust in AI-powered cybersecurity solutions today, when the idea of AI is still overshadowed, and its uses blocked, by fear, distrust and suspicion.